- Gerry FP's Thought Lab

Why Traditional ML Tools Don't Work for Building GenAI Applications

Before diving into this discussion, let me clarify the terminology used in this article:

Traditional ML app: ML apps for solving ranking, regression, and classification problems.

GenAI app: ML apps that are built using LLMs (large language models).

Recently, I’ve been reflecting on whether building GenAI applications differs significantly from traditional ML applications. Having experience developing both in big tech companies and startups, I’ve had mixed feelings. Building LLM-based applications feels novel yet nostalgic. This got me wondering: do we really need a new set of GenAI operations, or can we rely on existing ML tools built for traditional ML?

My conclusion? We need purpose-built GenAI tools due to differences in maturity, complexity, and the significance of various components. Here’s why:

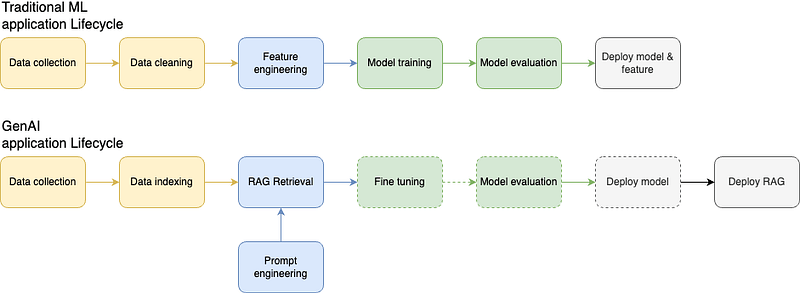

Fig 1: Traditional ML vs GenAI app lifecycle

Lifecycle Differences

At a high level, the lifecycles of traditional ML and GenAI applications look similar. However, a closer inspection reveals notable distinctions. Both rely on similar terminology and technologies (VectorDB, evaluation, retrieval, embeddings, and so on), but the roles these play differ vastly. For instance, a VectorDB was often a “nice-to-have” in traditional ML, while in GenAI it’s arguably the second most critical component after the foundation model itself. Prompt engineering and RAG (Retrieval-Augmented Generation) are defining features of GenAI. While embedding retrieval isn’t new to traditional ML, its scale and impact in GenAI applications are far greater.
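To make the embedding-retrieval idea concrete, here is a minimal sketch of what a vector lookup does at its core: rank stored vectors by cosine similarity to a query vector. The document IDs and three-dimensional vectors are made up for illustration; a real system would get its embeddings from a model and would typically use an approximate nearest-neighbor index rather than a full scan.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def top_k(query_vec, index, k=2):
    """Return the IDs of the k stored vectors most similar to the query."""
    scored = [(cosine_similarity(query_vec, vec), doc_id)
              for doc_id, vec in index.items()]
    scored.sort(reverse=True)  # highest similarity first
    return [doc_id for _, doc_id in scored[:k]]

# Hypothetical toy "embeddings"; real ones have hundreds of dimensions.
index = {
    "refund_policy": [0.9, 0.1, 0.0],
    "shipping_times": [0.1, 0.9, 0.1],
    "api_rate_limits": [0.0, 0.1, 0.9],
}
query = [0.8, 0.2, 0.0]  # assumed embedding of a refund-related question

nearest = top_k(query, index, k=2)
print(nearest)
```

The brute-force scan here is fine for a handful of documents. The gap between this and a production retrieval layer (indexing, sharding, freshness) is part of why the component carries more weight in GenAI apps.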

Dataset Preparation

While dataset preparation follows similar principles in both domains, the types of data vary.

Traditional ML: Structured data (e.g., numbers, categories).

GenAI: Predominantly unstructured data (e.g., text, images, audio, video).

This shift in data types significantly influences downstream processes like feature engineering and evaluation.

Feature Engineering vs. RAG Retrieval

Feature engineering is the cornerstone of traditional ML. Engineers and data scientists invest significant effort into crafting and maintaining features to optimize model performance. This step affects bias, responsiveness, and overall accuracy.

In contrast, feature engineering as a concept is largely absent in GenAI. Here, the primary inputs are:

Query Design (Prompt Engineering)

In-Context Learning (ICL): Retrieval from a RAG system.

While traditional ML applications occasionally leverage retrieval systems, those systems are far less complex than the ones GenAI applications depend on, which calls for a new approach to design and scaling.
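The contrast between the two kinds of model input can be sketched side by side. In the snippet below, the field names, the prompt template, and the passages are all hypothetical. The retrieval step is assumed to have already happened, so `retrieved_passages` is just a list of strings.

```python
def build_feature_vector(record):
    """Traditional ML: turn a structured record into numeric features."""
    return [
        float(record["age"]),
        float(record["purchases_last_30d"]),
        1.0 if record["plan"] == "premium" else 0.0,  # one binary flag
    ]

def build_prompt(question, retrieved_passages):
    """GenAI: combine the user's query with retrieved context (ICL)."""
    context = "\n".join(f"- {p}" for p in retrieved_passages)
    return (
        "Answer the question using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {question}\n"
        "Answer:"
    )

# Hypothetical structured record for a classifier or ranker.
features = build_feature_vector(
    {"age": 34, "purchases_last_30d": 5, "plan": "premium"}
)

# Hypothetical passages that a RAG retriever might have returned.
prompt = build_prompt(
    "How long do refunds take?",
    ["Refunds are processed within 5 business days.",
     "Refunds go back to the original payment method."],
)

print(features)
print(prompt)
```

In the first function the engineering effort goes into choosing and maintaining the features. In the second it goes into the wording of the template and the quality of whatever the retriever returns.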

Model Training and Fine-Tuning

While both traditional ML and GenAI may involve training and fine-tuning, the defining factor in GenAI is model size. Foundation models are massive, pushing the limits of existing training architectures. This necessitates scalable infrastructure designed specifically for these tasks.
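A rough back-of-the-envelope calculation shows why size dominates. The sketch below estimates only the memory needed to hold a model's weights, as parameter count times bytes per parameter. The two parameter counts are illustrative assumptions, and the estimate deliberately ignores gradients, optimizer state, and activations, which make training considerably more expensive than this.

```python
# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}

def weight_memory_gb(num_params, dtype):
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9

# Assumed sizes: a 10M-parameter traditional model and a 7B-parameter LLM.
small_model_gb = weight_memory_gb(10_000_000, "fp32")
large_model_gb = weight_memory_gb(7_000_000_000, "fp16")

print(f"10M params @ fp32: {small_model_gb:.2f} GB")
print(f"7B params @ fp16:  {large_model_gb:.2f} GB")
```

Under these assumptions the small model's weights take about 0.04 GB, while the 7B model needs about 14 GB before any training overhead is counted.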

Model Evaluation

Evaluation is the final and most crucial step before deployment. The evaluation process diverges due to differences in data types:

Traditional ML: Relatively straightforward, with clear metrics for success.

GenAI: Abstract inputs and outputs make it challenging to define right and wrong.

This ambiguity often requires human judgment to assess model performance, leading to a less standardized evaluation process compared to traditional ML.
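A small sketch illustrates the gap. For a classifier, accuracy against labels is unambiguous. For generated text, a correct answer phrased differently fails an exact-match check and gets only partial credit from a token-overlap score. The example strings are made up, and token-level F1 is used here only as one simple proxy, not as a recommended GenAI metric.

```python
from collections import Counter

def accuracy(predictions, labels):
    """Traditional ML: fraction of predictions that equal the label."""
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)

def exact_match(generated, reference):
    """Strict string equality after trimming and lowercasing."""
    return generated.strip().lower() == reference.strip().lower()

def token_f1(generated, reference):
    """Token-overlap F1 on whitespace-split, lowercased tokens."""
    gen = generated.lower().split()
    ref = reference.lower().split()
    overlap = sum((Counter(gen) & Counter(ref)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(gen)
    recall = overlap / len(ref)
    return 2 * precision * recall / (precision + recall)

# Classification: a clear right/wrong per example.
acc = accuracy([1, 0, 1, 1], [1, 0, 0, 1])

# Generation: a correct answer worded differently from the reference.
reference = "The capital of France is Paris"
generated = "France's capital city is Paris"
em = exact_match(generated, reference)
f1 = token_f1(generated, reference)

print(acc, em, round(f1, 3))
```

The generated answer is right, yet exact match rejects it and the overlap score lands in the middle of its range. Deciding whether that output is acceptable is where human judgment tends to come in.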

While many concepts and technologies from traditional ML are reused in GenAI, their significance and the problems they address operate at a different scale. Building a GenAI application with traditional ML tools is possible but impractical and inefficient. These applications solve fundamentally different problems, underscoring the need for specialized tools tailored to GenAI.

The emergence of new ML tools for GenAI is not just a trend — it’s a necessity. I anticipate this space will evolve significantly, especially as many continue to struggle with productionizing models.
